Kubernetes: Deprecated APIs aka Introducing Kube-No-Trouble

With Kubernetes 1.16 available for a while and starting to slowly roll out across many managed Kubernetes platforms, you might have heard about API deprecations. While fairly simple to deal with, this change might seriously disrupt your services if left unattended.

API deprecation - what is that?

As the Kubernetes feature set evolves, APIs have to evolve too in order to support this change. There are rules in place that aim to guarantee compatibility and stability (the Kubernetes Deprecation Policy document governs how different parts of the system can be made obsolete and removed), and deprecation does not happen with every release, but eventually you will have to use the new API version and format, as the old one won’t be supported anymore.

Why is this important with the 1.16 release?

A few deprecated APIs have been kept around for the last couple of Kubernetes versions and are finally being removed completely in the Kubernetes 1.16 release, namely the following API groups and versions:

- Deployment - extensions/v1beta1, apps/v1beta1 and apps/v1beta2
- NetworkPolicy - extensions/v1beta1
- PodSecurityPolicy - extensions/v1beta1
- DaemonSet - extensions/v1beta1 and apps/v1beta2
- StatefulSet - apps/v1beta1 and apps/v1beta2
- ReplicaSet - extensions/v1beta1, apps/v1beta1 and apps/v1beta2

If you try to create a resource using one of these with 1.16, the operation will simply fail.
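As a rough illustration, the list above can be written down as a lookup table and used to check a single manifest. This is only a minimal sketch: the names `REMOVED_IN_1_16` and `is_removed_in_1_16` are made up for this example, and it is not how kubent itself is implemented.

```python
# Kinds and the API versions removed for them in Kubernetes 1.16,
# transcribed from the list above.
REMOVED_IN_1_16 = {
    "Deployment": {"extensions/v1beta1", "apps/v1beta1", "apps/v1beta2"},
    "NetworkPolicy": {"extensions/v1beta1"},
    "PodSecurityPolicy": {"extensions/v1beta1"},
    "DaemonSet": {"extensions/v1beta1", "apps/v1beta2"},
    "StatefulSet": {"apps/v1beta1", "apps/v1beta2"},
    "ReplicaSet": {"extensions/v1beta1", "apps/v1beta1", "apps/v1beta2"},
}


def is_removed_in_1_16(manifest: dict) -> bool:
    """Return True if the manifest's kind/apiVersion pair is in the table."""
    kind = manifest.get("kind")
    api_version = manifest.get("apiVersion")
    return api_version in REMOVED_IN_1_16.get(kind, set())


# Two hypothetical manifests, reduced to the fields that matter here.
old = {"apiVersion": "extensions/v1beta1", "kind": "Deployment"}
new = {"apiVersion": "apps/v1", "kind": "Deployment"}
print(is_removed_in_1_16(old))  # True
print(is_removed_in_1_16(new))  # False
```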

How to check if I am affected?

You can manually go through all your manifests, but that can be fairly time-consuming and it’s easy to miss some. It might also be highly impractical if you have multiple teams deploying to the cluster, or simply don’t have all the current manifests in one place. This is where Kube-No-Trouble, aka kubent, comes to help.

What’s the catch?

The information about which API version was used to create a given resource is normally not accessible, as the resource is always converted to and stored in the preferred storage version internally. However, if you’re using kubectl or Helm to deploy your resources, the original manifest is stored inside the cluster as well, and we can leverage that. It is kept either in the kubectl.kubernetes.io/last-applied-configuration annotation in the case of kubectl, or as a ConfigMap or Secret in the case of Helm.
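To make the kubectl case concrete, here is a minimal sketch of reading the originally applied apiVersion back out of that annotation. The resource below is a made-up, trimmed-down example, and `original_api_version` is a hypothetical helper, not part of kubent or kubectl; it assumes the annotation holds the applied manifest as a JSON string.

```python
import json

LAST_APPLIED = "kubectl.kubernetes.io/last-applied-configuration"


def original_api_version(resource: dict):
    """Return the apiVersion recorded in the last-applied annotation, or None."""
    annotations = resource.get("metadata", {}).get("annotations") or {}
    raw = annotations.get(LAST_APPLIED)
    if raw is None:
        return None  # no annotation present, nothing to inspect
    return json.loads(raw).get("apiVersion")


# A made-up resource as it might be returned by the API: the live object
# is shown in a newer version, the annotation still holds the old manifest.
live = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {
        "name": "demo",
        "annotations": {
            LAST_APPLIED: json.dumps(
                {
                    "apiVersion": "extensions/v1beta1",
                    "kind": "Deployment",
                    "metadata": {"name": "demo"},
                }
            )
        },
    },
}

print(live["apiVersion"])          # apps/v1
print(original_api_version(live))  # extensions/v1beta1
```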

Sounds good, how do I use it?

The simplest way to install it is:

| |

This will install the latest version of kubent to /usr/local/bin. (If you, like me, don’t trust random scripts someone posts in blog posts, download the latest release for your platform and unpack it wherever you prefer.)

Configure kubectl’s current context to point to the cluster you want to check and run the kubent tool:

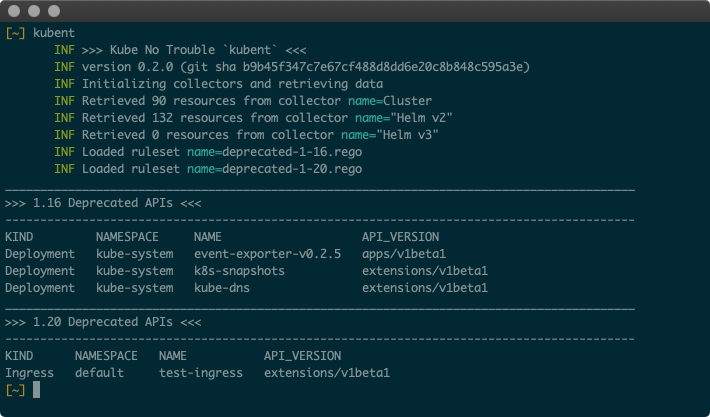

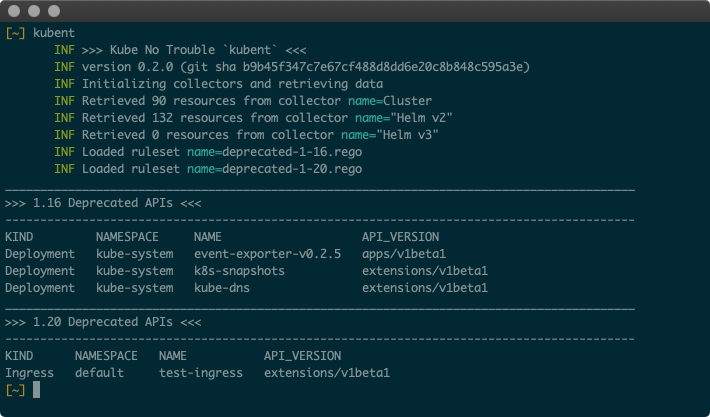

Fig. 1: Sample output of kubent run

Kubent will connect to your cluster, retrieve all resources that might be affected, scan them, and print a summary of those that are.

You can also use the -f json flag to get the output in JSON format, which is more suitable if you want to integrate this into your CI/CD pipeline or process the results further. More details on the available configuration options are described in the README of the doitintl/kube-no-trouble repo.
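A CI step built on the JSON output could look roughly like the sketch below. It assumes the report is a JSON array with one object per detected resource; the field names `Kind`, `Name` and `ApiVersion` are assumptions made for illustration, so check them against the actual output before relying on them. The function `exit_code_for` is likewise hypothetical.

```python
import json


def exit_code_for(report_json: str) -> int:
    """Return 1 if the report lists any findings, 0 otherwise."""
    findings = json.loads(report_json)
    for item in findings:
        # Field names are assumed for this sketch; adjust to the real output.
        print(
            f"deprecated: {item.get('Kind')}/{item.get('Name')} "
            f"({item.get('ApiVersion')})"
        )
    return 1 if findings else 0


# Hand-written sample reports, not real tool output.
sample = '[{"Name": "demo", "Kind": "Deployment", "ApiVersion": "extensions/v1beta1"}]'
print(exit_code_for(sample))  # 1
print(exit_code_for("[]"))    # 0
```

In a pipeline, the return value would be passed to `sys.exit` so that the job fails whenever anything is reported.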

What should I do with the resources that are detected?

In some cases, it’s as simple as changing the apiVersion in your manifest, but in others the structure might have changed and will need adjusting. Also, be aware that a lot of defaults changed between versions, so by only changing the apiVersion and applying the same manifest you would end up with different results. For example, the default for StatefulSet’s updateStrategy.type changed from OnDelete to RollingUpdate, resulting in very different behaviour. (A nice article about this is David Schweikert’s Kubernetes 1.16 API deprecations and changed defaults.)
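One way to guard against a changed default is to write the old default into the manifest explicitly when bumping the apiVersion. The sketch below does this for the StatefulSet example only, assuming the manifest was written against a version where updateStrategy.type defaulted to OnDelete. `migrate_statefulset` is a hypothetical helper, and other fields may need similar care.

```python
import copy


def migrate_statefulset(manifest: dict) -> dict:
    """Return a copy targeting apps/v1 with updateStrategy.type pinned."""
    migrated = copy.deepcopy(manifest)  # leave the caller's manifest untouched
    migrated["apiVersion"] = "apps/v1"
    spec = migrated.setdefault("spec", {})
    strategy = spec.setdefault("updateStrategy", {})
    # Only fill in the old default when the author did not choose a type.
    strategy.setdefault("type", "OnDelete")
    return migrated


# A made-up manifest that relied on the old default.
old = {
    "apiVersion": "apps/v1beta1",
    "kind": "StatefulSet",
    "spec": {"serviceName": "db"},
}
new = migrate_statefulset(old)
print(new["apiVersion"], new["spec"]["updateStrategy"])
# apps/v1 {'type': 'OnDelete'}
```

A manifest that already sets updateStrategy.type keeps its own value, since `setdefault` never overwrites an existing key.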

The previously used kubectl convert command is now deprecated, and might not convert your resources correctly with respect to the default values mentioned above.

Possibly the best way is to simply apply the resources (they are already applied if you detected them with kubent) and retrieve the new version from the API. This ensures that the resource is correctly transformed to the new version. You might have noticed that kubectl is somewhat non-deterministic as to which version it returns. To request a specific API version, use the full form:

| |
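For reference, kubectl accepts a fully qualified resource.version.group name, for example deployments.v1.apps. The small sketch below only assembles such a command line; the helper names, the resource name `demo` and the namespace are made up for illustration.

```python
def qualified_resource(resource: str, version: str, group: str) -> str:
    """Build kubectl's resource.version.group form, e.g. deployments.v1.apps."""
    return ".".join([resource, version, group])


def get_command(resource, version, group, name, namespace="default"):
    """Return the argv for fetching one resource in a specific API version."""
    return [
        "kubectl", "get", qualified_resource(resource, version, group),
        name, "-n", namespace, "-o", "yaml",
    ]


print(" ".join(get_command("deployments", "v1", "apps", "demo")))
# kubectl get deployments.v1.apps demo -n default -o yaml
```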

Feedback welcome!

So hopefully this will help you to detect and deal with the use of deprecated APIs in your Kubernetes clusters before these could cause you any trouble.

It is still very early for the kubent tool, and I would love to hear any comments and suggestions, and whether you find it useful. Safe sailing! ⛵⛵⛵